K fold cross validation Python

K fold cross validation Python

I'll ignore your "rules" and respond in English.

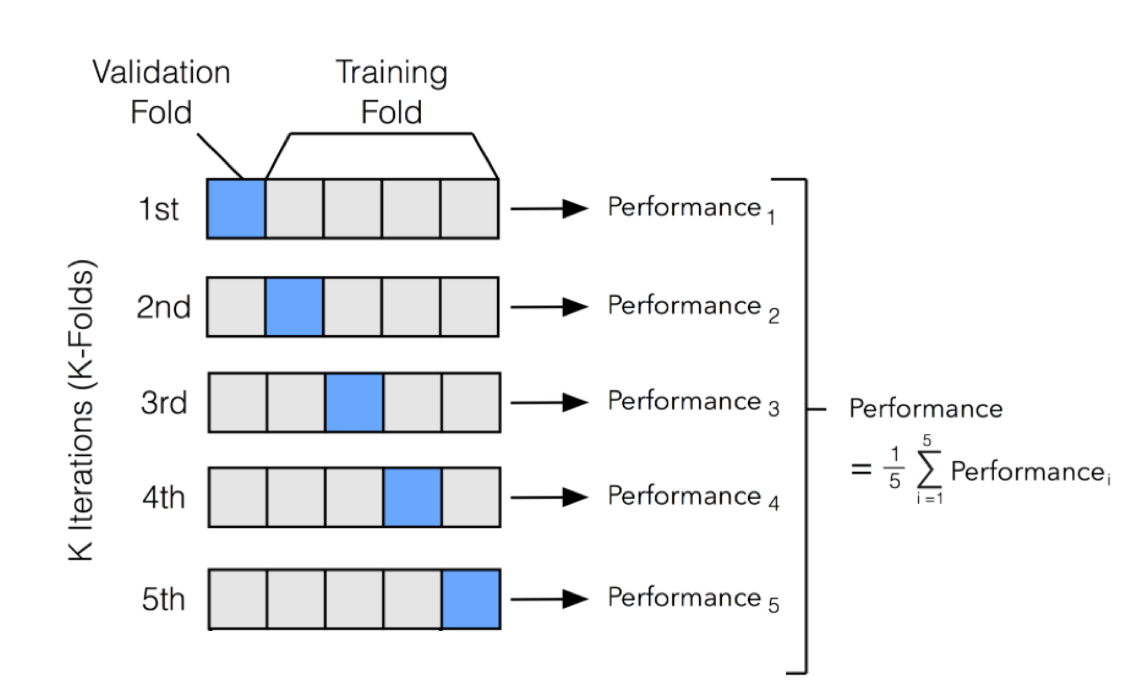

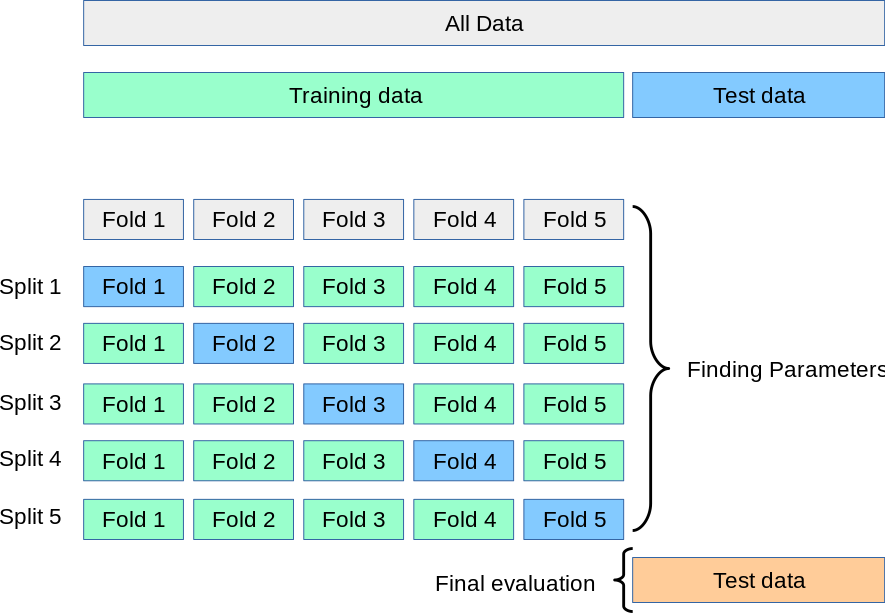

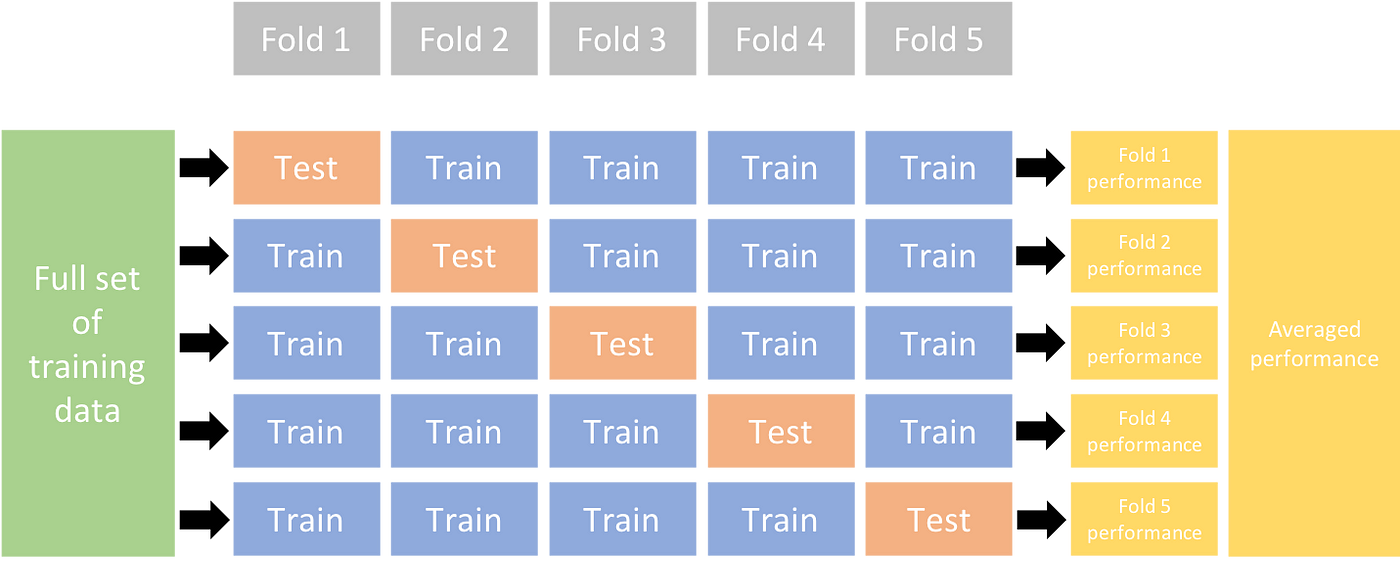

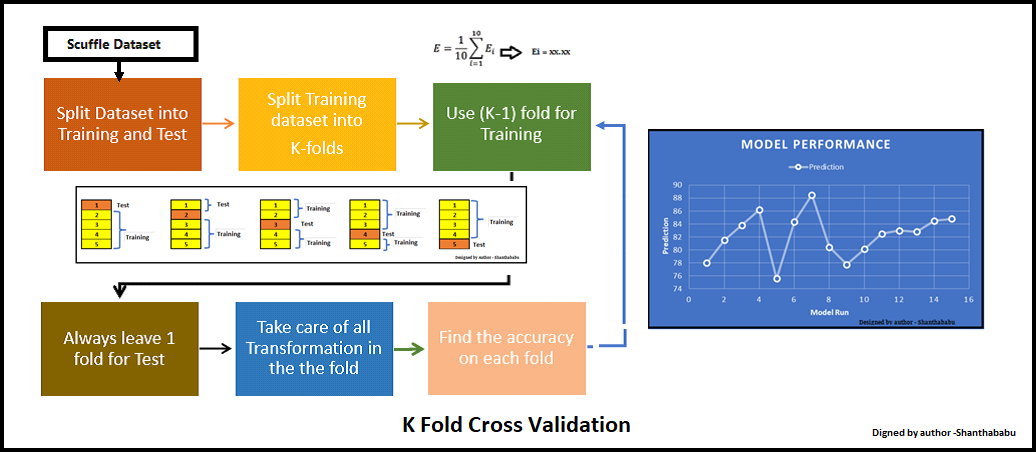

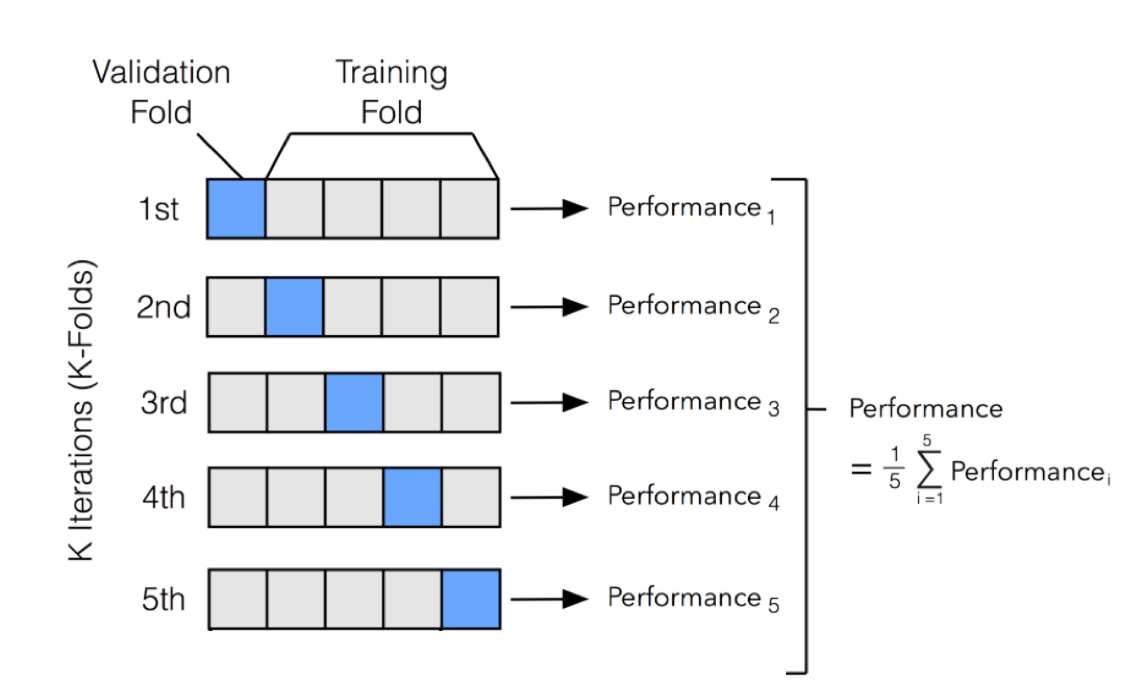

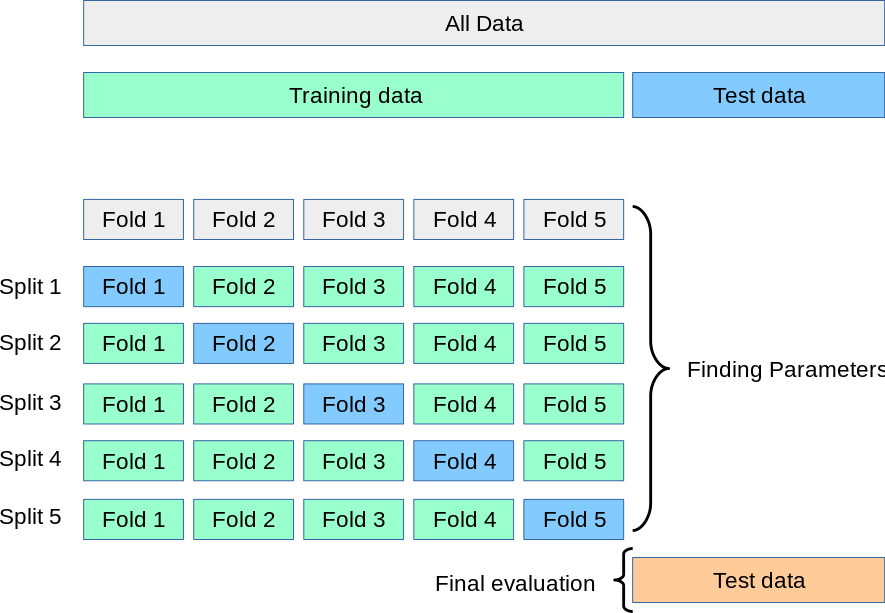

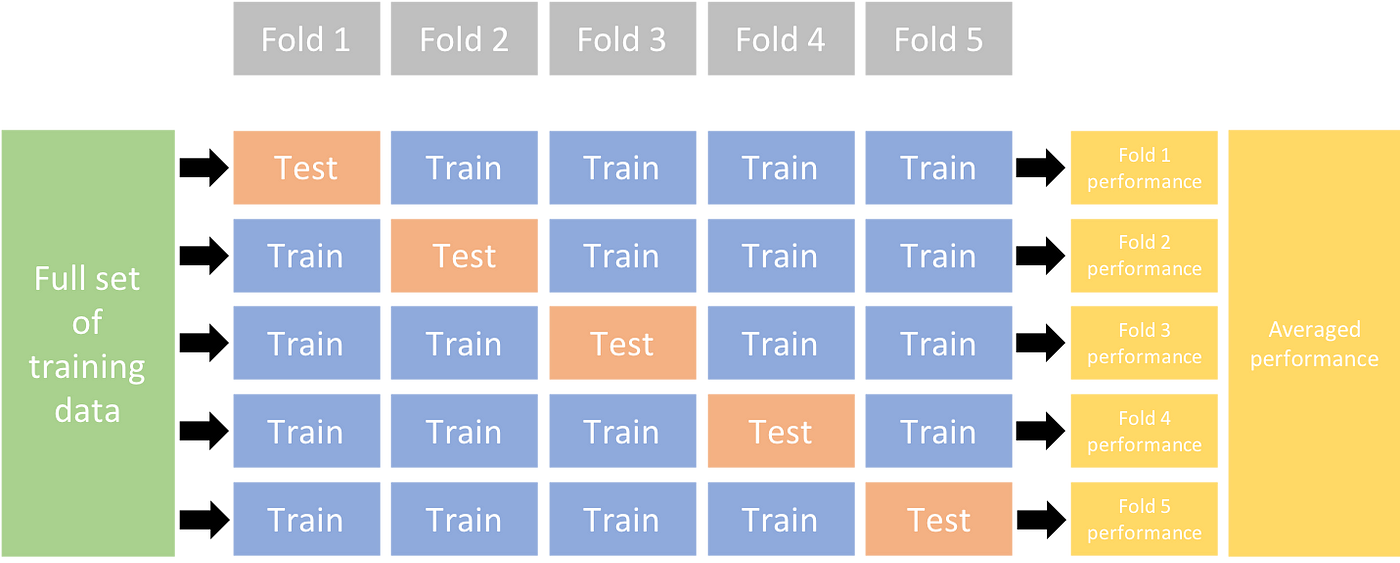

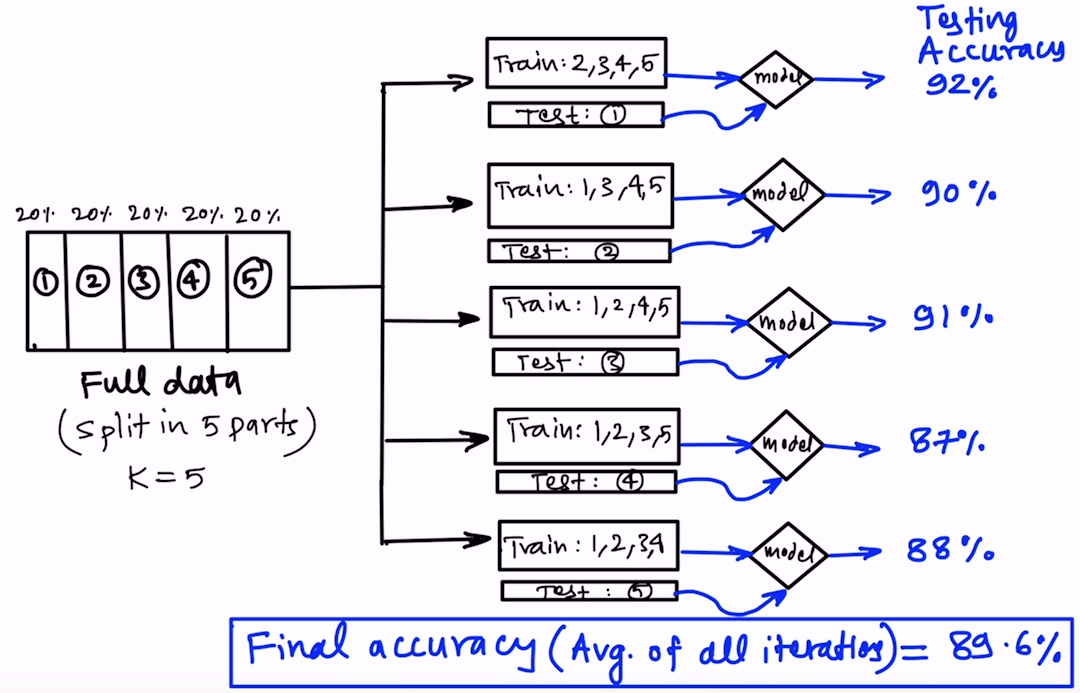

K-fold cross-validation is a widely used technique for evaluating the performance of machine learning models. The idea behind k-fold CV is to split the dataset into k subsets (also called folds), and use one fold as the test set while training on the remaining k-1 folds. This process is repeated k times, with each fold serving as the test set once.

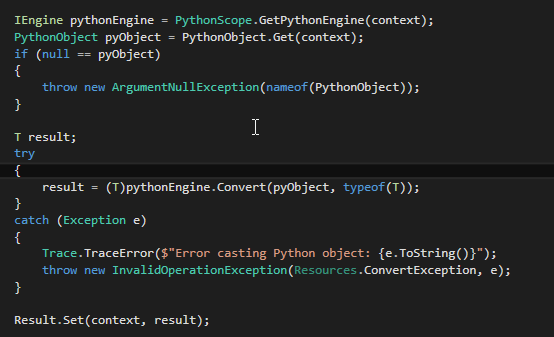

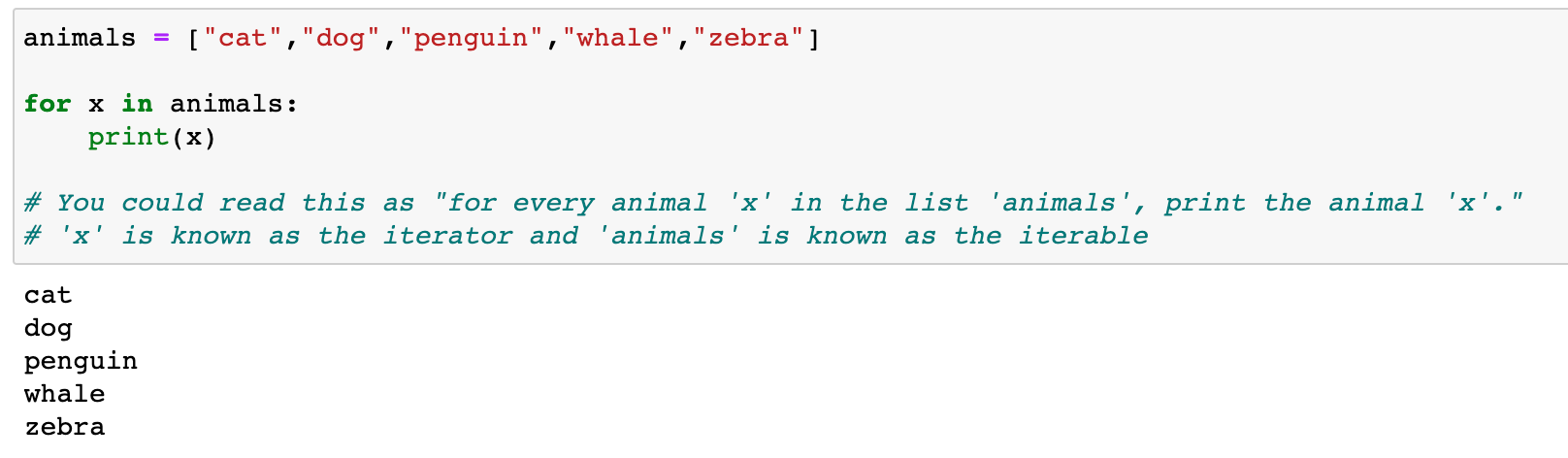

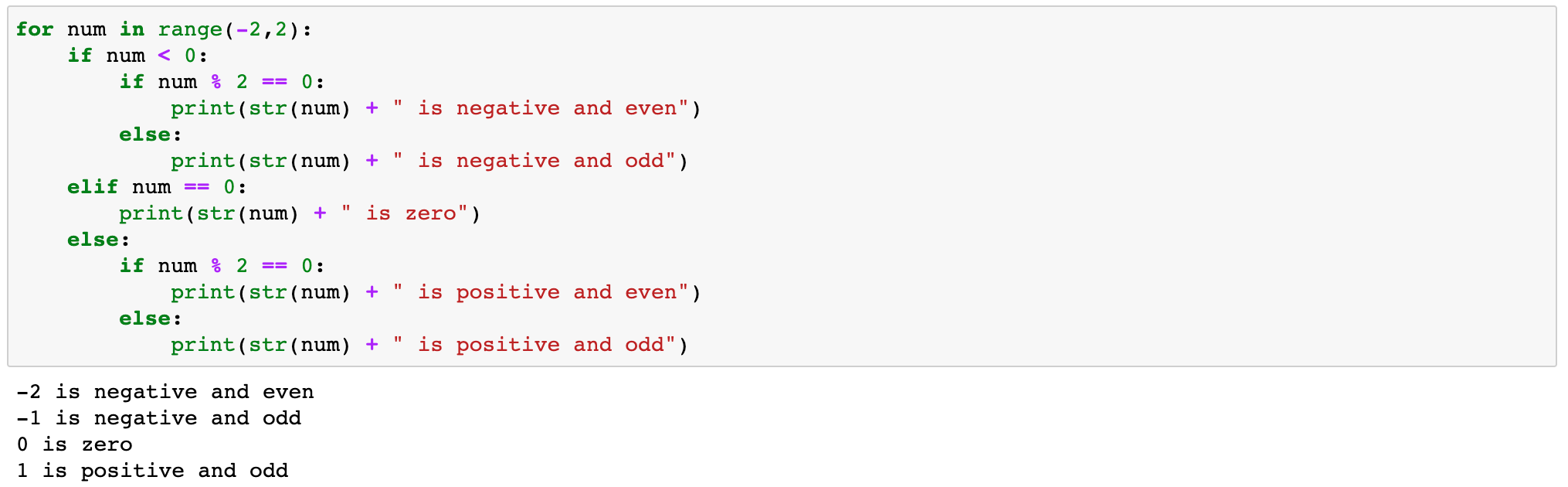

Here's an example implementation in Python using scikit-learn:

from sklearn.model_selection import KFold Load your datasetX_train, y_train = load_data()

Set the number of folds

kfold = KFold(n_splits=5, shuffle=True, random_state=42)

Define a function to evaluate the model on each folddef evaluate_model(model):

scores = []

for train_index, val_index in kfold.split(X_train):

X_train_fold, X_val_fold = X_train[train_index], X_train[val_index]

y_train_fold, y_val_fold = y_train[train_index], y_train[val_index]

Train the model on the current foldmodel.fit(X_train_fold, y_train_fold)

Evaluate the model on the current foldy_pred_fold = model.predict(X_val_fold)

score = accuracy_score(y_val_fold, y_pred_fold)

scores.append(score)

Calculate the mean score across all foldsmean_score = np.mean(scores)

return mean_score

Define your machine learning model (e.g. linear regression, random forest, etc.)model = LinearRegression()

Evaluate the model using k-fold CVmean_score = evaluate_model(model)

print(f"Mean accuracy: {mean_score:.4f}")

In this example, we load a dataset X_train and y_train, set the number of folds to 5 (you can adjust this depending on your specific needs), and define an evaluation function that trains and evaluates the model on each fold. The mean score is calculated across all folds.

Note that you'll need to replace load_data() with your own data loading code, as well as modify the model definition (LinearRegression()) to match your specific machine learning problem.

K-fold CV has several advantages:

Reduces overfitting: By training and evaluating the model on different subsets of the data, k-fold CV helps prevent overfitting by providing a more robust estimate of the model's performance. Provides an unbiased estimate: The mean score calculated across all folds is an unbiased estimate of the model's true performance on unseen data. Helps with hyperparameter tuning: K-fold CV can be used to evaluate different sets of hyperparameters and select the best combination.However, k-fold CV also has some limitations:

Computationally intensive: Evaluating a model using k-fold CV can be computationally expensive, especially for large datasets or complex models. May not generalize well to new data: If your dataset is very small or biased towards certain classes, k-fold CV may not provide an accurate estimate of the model's performance on unseen data.Overall, k-fold cross-validation is a powerful technique for evaluating machine learning models and can help you make more informed decisions about model selection and hyperparameter tuning.

What is cross-validation in Python?

I'm happy to help you with that!

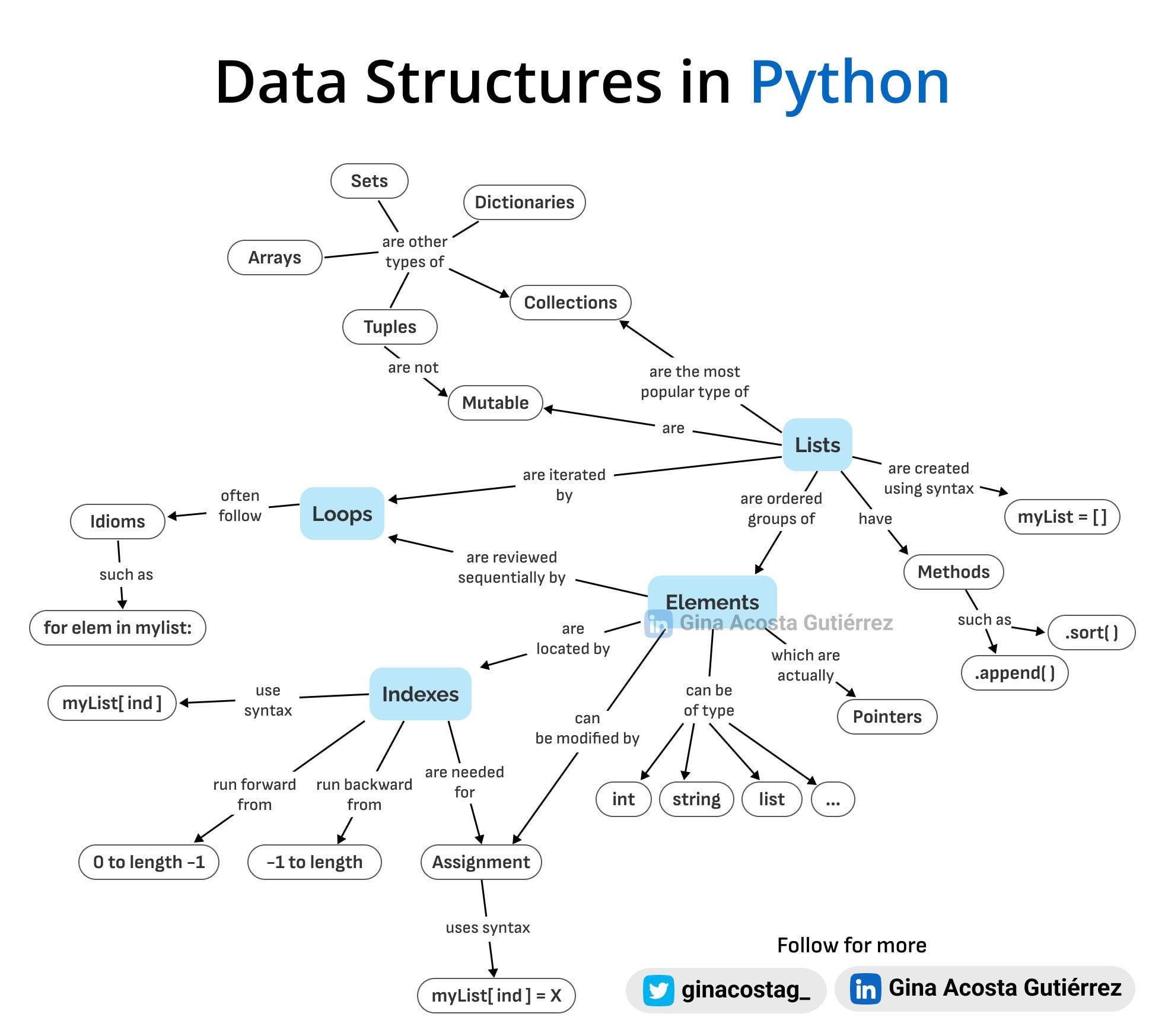

Cross-validation (CV) is a fundamental technique in machine learning that helps evaluate the performance of your model on unseen data. In other words, it's a way to prevent overfitting by splitting your dataset into multiple parts and training your model on some parts while testing it on others.

In Python, cross-validation can be implemented using various libraries and functions. Here are the general steps:

Split your dataset: Split your dataset into n subsets or folds (e.g., 5-fold CV). Train and evaluate: Train your model on one subset and evaluate its performance on another subset. Repeat for all subsets: Repeat step 2 for each subset, so you're essentially training and evaluating your model multiple times with different data splits.Now, let's dive deeper into the implementation in Python:

Using Scikit-Learn

The train_test_split function from scikit-learn is a great way to split your dataset. Then, use the cross_val_score or cross_val_predict functions from the same library for cross-validation.

from sklearn.model_selection import train_test_split, cross_val_scorefrom sklearn.linear_model import LogisticRegression

Assuming you have a DataFrame X and target yX_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

Train a logistic regression modelmodel = LogisticRegression()

model.fit(X_train, y_train)

Perform 5-fold cross-validationcv_results = cross_val_score(model, X, y, cv=5)

print("Cross-validation score:", cv_results.mean())

Using Keras

For neural networks and deep learning models, you can use the KFold class from Keras.

from keras.wrappers.scikit_learn import KerasClassifierfrom keras.models import Sequential

from keras.layers import Dense

Assuming you have a DataFrame X and target yX_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

Define your model architecturemodel = Sequential([...]) # Add layers here

Compile the modelmodel.compile(optimizer='adam', loss='binary_crossentropy')

Perform 5-fold cross-validationkfold = KFold(n_splits=5, shuffle=True)

scores = []

for train_index, val_index in kfold.split(X):

X_train_fold, X_val_fold = X.iloc[train_index], X.iloc[val_index]

y_train_fold, y_val_fold = y.iloc[train_index], y.iloc[val_index]

model.fit(X_train_fold, y_train_fold, epochs=10)

score = model.evaluate(X_val_fold, y_val_fold)

scores.append(score[1])

print("Cross-validation score:", np.mean(scores))

Other Libraries and Functions

There are many other libraries and functions in Python that can help you implement cross-validation. For example:

train_test_split from scikit-learn cross_validate from Statsmodels CV class from PyOD StratifiedKFold from scikit-learn

Remember, the key to effective cross-validation is choosing the right number of folds and ensuring your model isn't overfitting on any particular subset. Happy training!